A Propensity for Mistakes

I have had this little text lying around for a while. I wrote it as much to force myself to articulate the idea as for anyone to read, but then I thought I might as well publish it. Maybe it has no merit, or maybe someone has already written it. Here it is regardless.

One of the challenges of doing generative work is getting more interesting results. The algorithms you write will do what you design them to do. You might not fully understand every aspect of them, but even so, the algorithms are not creative, and they do not improvise.

So what is a possible strategy for generating more interesting results? Here are three components that I think are needed.

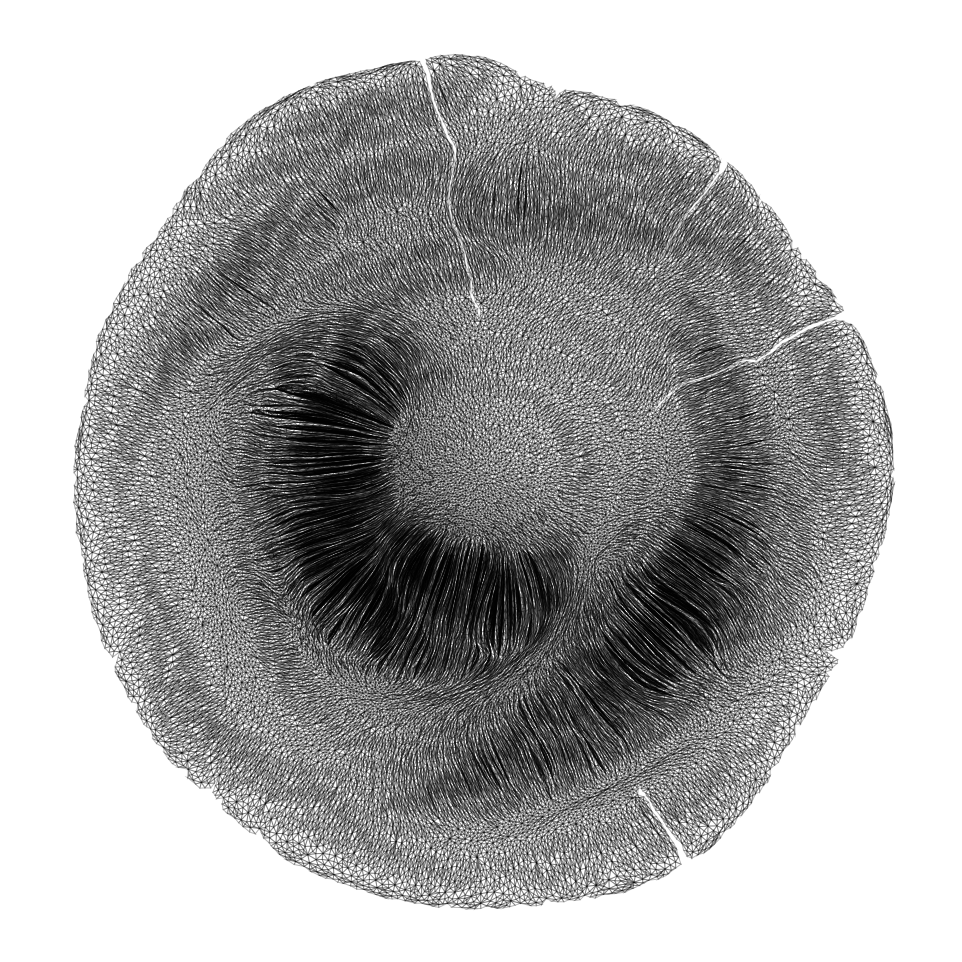

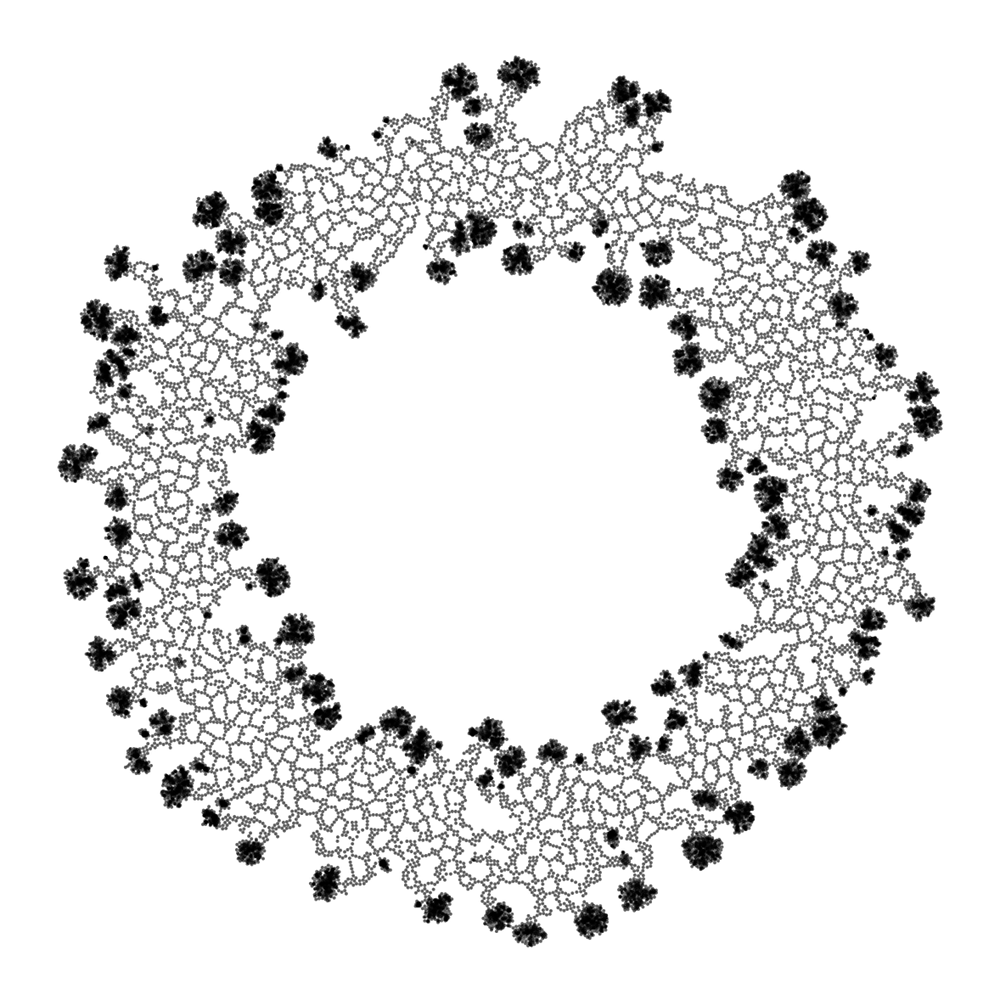

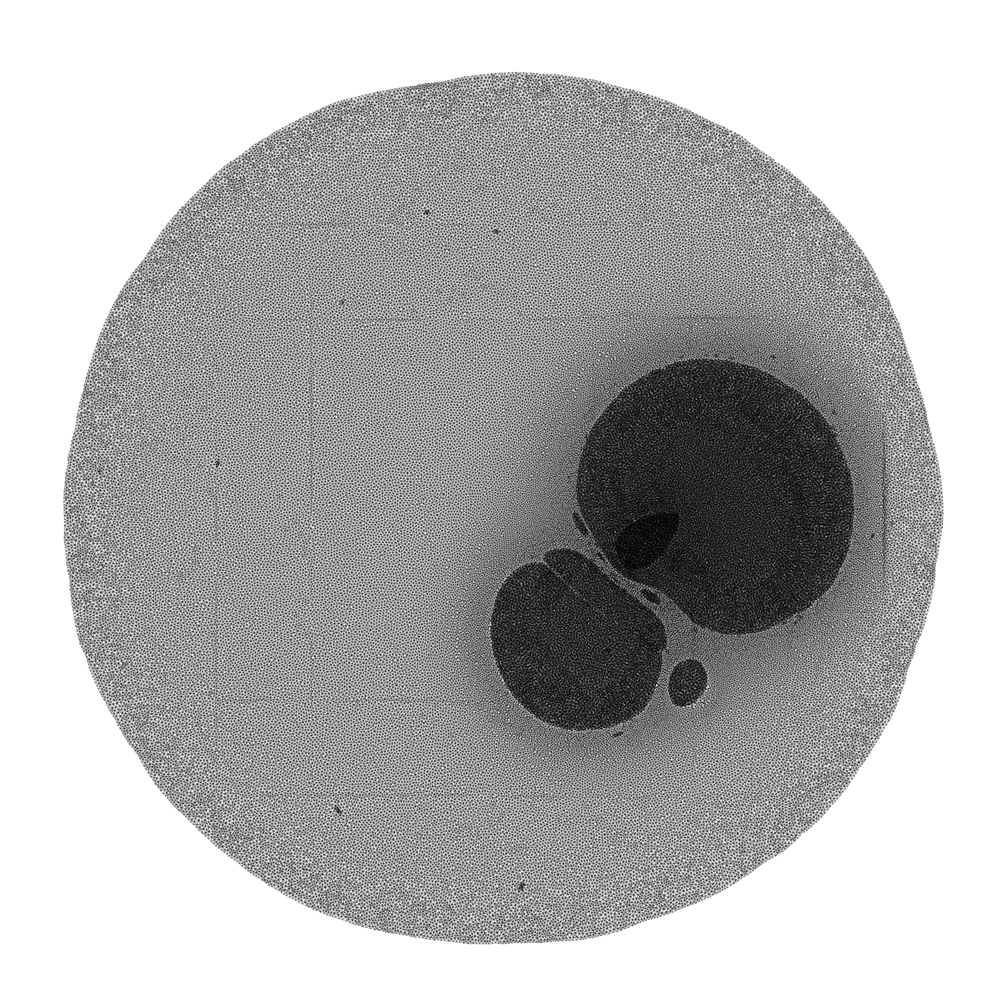

First, you need a set of rules. These rules should be able to create structures similar to what you want your system to create. I tend to work with geometry, so for me these would be rules that can generate geometric structures: meshes, splines or other kinds of shapes. This was partly why I started working on SNEK.

After that, you need a way to introduce variation. I often find that my programming mistakes yield interesting results, so I think one possible strategy is to introduce errors into the rules of your system. There are two obvious ways of doing this. One is to find a way to introduce errors as you apply a given rule. The other is to have a separate stage in your system that messes with your data structure somehow.
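To make the two approaches a little more concrete, here is a minimal Python sketch, assuming the data structure is a 2D polyline stored as a list of `(x, y)` tuples. The functions `grow` and `mess_with`, the turning rule, and all the rates are hypothetical illustrations chosen for this example, not code from SNEK. `grow` injects errors while a rule is applied; `mess_with` is a separate stage that perturbs the finished structure.

```python
import math
import random

# Hypothetical data structure: a polyline as a list of (x, y) tuples.


def grow(path, step=1.0, turn=0.1, error_rate=0.0, rng=random):
    """Apply one growth rule: append a point that continues the path
    along its current heading, turned by a small angle.

    With probability error_rate the rule is applied "wrong": the turn
    is flipped and exaggerated (an arbitrary choice of mistake)."""
    (x0, y0), (x1, y1) = path[-2], path[-1]
    heading = math.atan2(y1 - y0, x1 - x0)
    delta = turn
    if rng.random() < error_rate:
        delta = -5.0 * turn  # mistake injected while applying the rule
    heading += delta
    path.append((x1 + step * math.cos(heading), y1 + step * math.sin(heading)))
    return path


def mess_with(path, jitter=0.5, drop_rate=0.05, rng=random):
    """Separate stage that perturbs an existing polyline: jitter every
    point and occasionally drop a vertex (the first two are always kept)."""
    out = []
    for x, y in path:
        if len(out) >= 2 and rng.random() < drop_rate:
            continue  # drop this vertex
        out.append((x + rng.uniform(-jitter, jitter),
                    y + rng.uniform(-jitter, jitter)))
    return out


# Small demo with a seeded generator so runs are repeatable.
rng = random.Random(1)
path = [(0.0, 0.0), (1.0, 0.0)]
for _ in range(100):
    grow(path, error_rate=0.05, rng=rng)
noisy = mess_with(path, rng=rng)
```

The error in `grow` still respects the rule's step length, so the mistakes change the shape without breaking the structure, while `mess_with` makes no such promise. That difference is part of why some kind of correction stage becomes necessary.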

Finally, since you probably want the system to work over time, you need to give it a way to correct itself to some extent. Depending on what data structures are involved, you will need to maintain some level of integrity.
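As one possible example of such a correction step, here is a minimal Python sketch for a 2D polyline stored as a list of `(x, y)` tuples. The integrity rule (every segment length must lie between `min_len` and `max_len`) and the function name `correct` are assumptions made for illustration; other data structures, such as meshes, would need entirely different constraints.

```python
import math
import random


def correct(path, min_len=0.5, max_len=2.0):
    """Hypothetical self-correction pass for a polyline.

    Assumed integrity constraint: every segment length lies in
    [min_len, max_len]. Requires min_len <= max_len / 2, so that
    subdividing a long segment never creates a too-short one."""
    if min_len > max_len / 2:
        raise ValueError("min_len must be at most max_len / 2")
    if not path:
        return []
    # 1. Drop vertices closer than min_len to the previously kept vertex.
    kept = [path[0]]
    for p in path[1:]:
        if math.dist(kept[-1], p) >= min_len:
            kept.append(p)
    # 2. Split segments longer than max_len into n equal pieces.
    out = [kept[0]]
    for (x0, y0), (x1, y1) in zip(kept, kept[1:]):
        d = math.hypot(x1 - x0, y1 - y0)
        n = max(1, math.ceil(d / max_len))
        for k in range(1, n):
            t = k / n
            out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
        out.append((x1, y1))
    return out


# Demo: a jittery path whose segments are both too short and too long.
rng = random.Random(7)
noisy = [(i * 0.3 + rng.uniform(-1, 1), rng.uniform(-1, 1)) for i in range(50)]
fixed = correct(noisy)
```

The pass only restores the constraint; it does not undo the variation, which is roughly the balance described above. Note that a vertex dropped in step 1 can include the original endpoint, so the tail of the path may shorten slightly.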

Obviously none of the suggestions above tell you how to encode and implement this. Unless someone finds a good way to do that, these suggestions are pretty worthless. However, I have some hope that someone will eventually be able to do (at least some of) this. Maybe someone already has?

There is a lot of talk about neural networks at the moment, and I think perhaps this is a possible place to start. My limited understanding of how generative adversarial networks (GANs) work makes me think that, to some extent, they do this already.

If you can find a way to encode these properties, then you are only left with the final challenge of deciding what interesting actually means.

- What interesting means is a big discussion on its own, so let us ignore that for now. I touched a little on this when I wrote about my Twitter bots.

- A favorite idea of mine is to introduce actual programming mistakes, but I don't really know how you would go about doing that in practice.