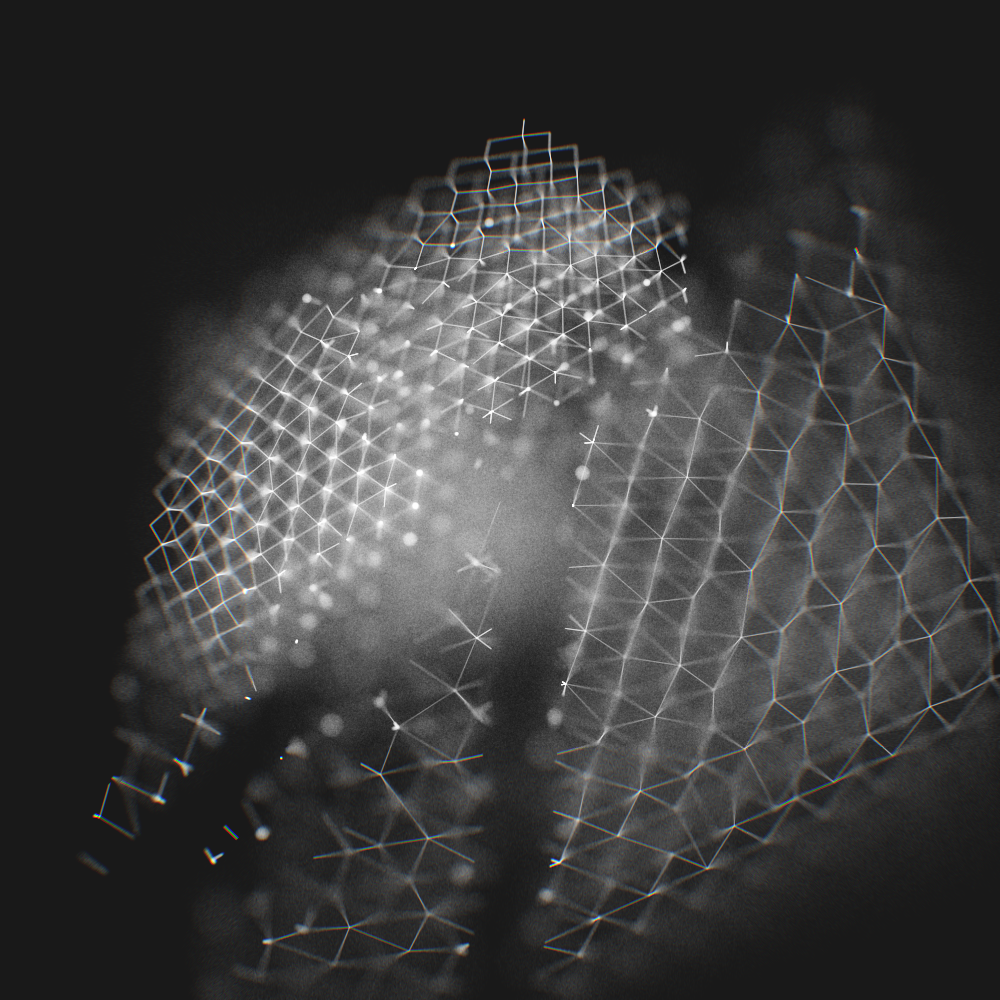

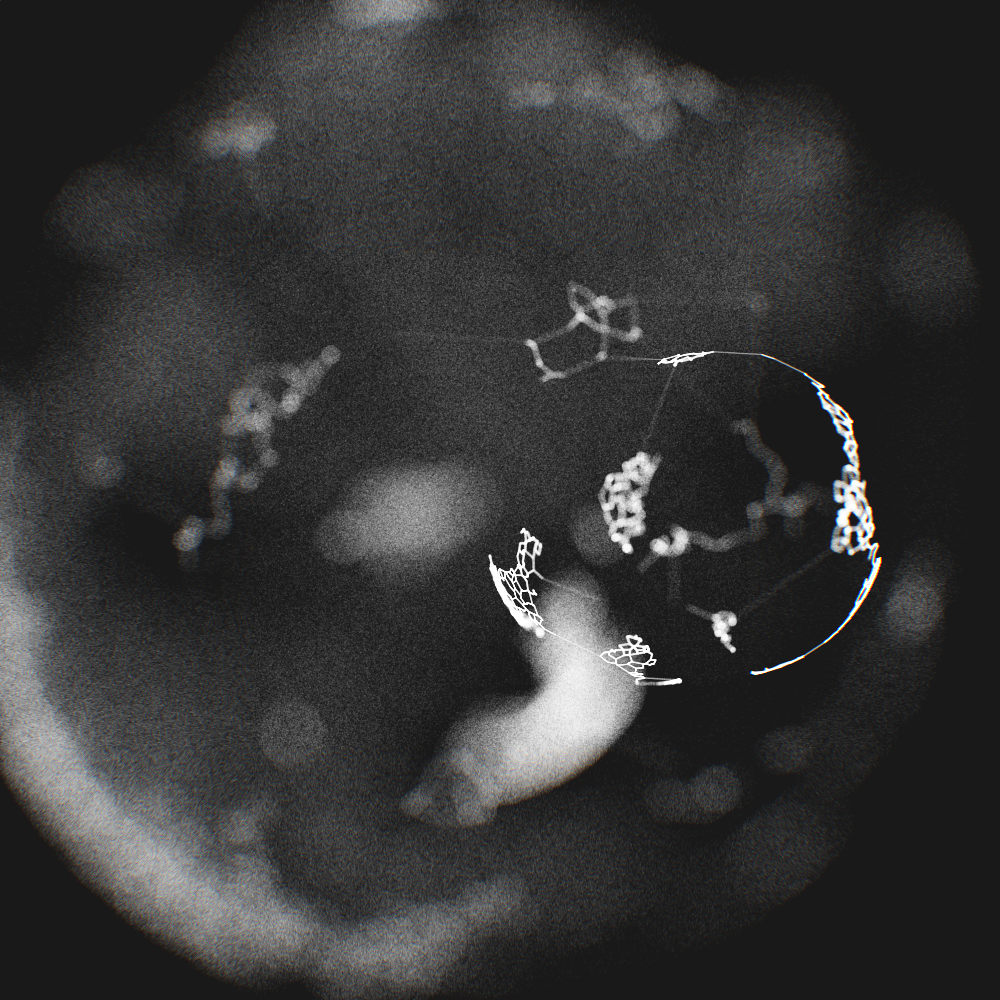

The 3D webs in the previous post are represented as graphs. Rendering them in 3D presents a few challenges. Depending on what tools you use, you might be able to convert them into meshes. There are several other ways to do it as well.

The following is an outline of the method I used to render the webs, as well as the images you see here. It does not involve building meshes. It should work for a wide range of graph structures, and probably other things as well.

- A line in 3D, `l=[a, b]`;
- a camera at position, `c`;
- a focus distance from camera, `f`;
- a distance function, `dst(a, b)`, that yields the distance between `a` and `b`;
- a function, `lerp(a, b, s) = a + s*(b-a)`, which interpolates between `a` and `b`;
- a function, `rnd()`, that yields random numbers between `0` and `1`; and
- a function, `rndSphere(r)`, that returns random points inside a sphere with radius, `r`.
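As a rough illustration, the helper functions in this list could be sketched in plain Python as follows. Points are assumed to be 3-tuples of floats, and `rnd_sphere` is assumed to sample uniformly inside the sphere (via rejection sampling); the list above only asks for "random points inside a sphere", so the uniform distribution is my assumption.

```python
import math
import random


def dst(a, b):
    """Euclidean distance between two 3D points."""
    return math.dist(a, b)


def lerp(a, b, s):
    """Linear interpolation a + s*(b - a), applied per coordinate."""
    return tuple(ai + s * (bi - ai) for ai, bi in zip(a, b))


def rnd():
    """Random number in [0, 1)."""
    return random.random()


def rnd_sphere(r):
    """Random point inside a sphere of radius r centred on the origin.

    Assumption: uniform distribution, obtained by rejection sampling
    from the enclosing cube.
    """
    while True:
        p = tuple(random.uniform(-1.0, 1.0) for _ in range(3))
        if sum(x * x for x in p) <= 1.0:
            return tuple(r * x for x in p)
```

Any other way of picking points inside the sphere would do; a non-uniform distribution simply changes how the blur looks.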

With the previous definitions in mind, you can draw all the lines, `l`, in your graph structure using the following method:

- Select a point, `v = lerp(a, b, rnd())`, on `l`.
- Calculate the distance, `d = dst(v, c)`.
- Find the sample radius, `r = m * pow(abs(f - d), e)`.
- Find the new position, `w = v + rndSphere(r)`.
- Project `w` into 2D, and draw a pixel/dot.
- Sample a fixed number of times, or according to the length of `l`. Use a low alpha value, and a high number of samples for a smooth result.
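The steps above can be sketched in Python roughly like this. It is a minimal illustration, not the code used for the images: `sample_line` and `project` are hypothetical names, and `project` is assumed to be a trivial orthographic projection that just drops the z coordinate. The function returns the 2D dot positions and leaves the actual drawing to whatever raster tool you use.

```python
import math
import random


def lerp(a, b, s):
    """Linear interpolation a + s*(b - a), applied per coordinate."""
    return tuple(ai + s * (bi - ai) for ai, bi in zip(a, b))


def rnd_sphere(r):
    """Random point inside a sphere of radius r (assumed uniform)."""
    while True:
        p = tuple(random.uniform(-1.0, 1.0) for _ in range(3))
        if sum(x * x for x in p) <= 1.0:
            return tuple(r * x for x in p)


def project(w):
    """Assumption: orthographic projection along z, i.e. drop z."""
    return (w[0], w[1])


def sample_line(a, b, c, f, m=1.0, e=1.0, samples=1000):
    """Return 2D dot positions for the line [a, b] seen from camera c."""
    dots = []
    for _ in range(samples):
        v = lerp(a, b, random.random())    # point on the line
        d = math.dist(v, c)                # distance to the camera
        r = m * pow(abs(f - d), e)         # sample radius
        offset = rnd_sphere(r)
        w = tuple(vi + oi for vi, oi in zip(v, offset))  # displaced point
        dots.append(project(w))            # 2D position of the dot
    return dots


dots = sample_line((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), c=(0.0, 0.0, 10.0), f=10.0)
```

Each returned position would then be drawn as a single dot with a low alpha value, so that areas where many samples overlap end up sharp and the rest fades into blur.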

Here `m` is a parameter that adjusts the size of the depth of field sphere (the "Circle of Confusion"), and `e` adjusts the distribution of the samples inside the sampling sphere. Start by setting both to numbers close to `1`, then adjust them and see what happens.
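To get a feel for the two parameters before rendering anything, it can help to print the sample radius for a few distances. The snippet below is only a sketch; `sample_radius` is a hypothetical name, and the distances and the focus distance of `10.0` are arbitrary values chosen for illustration.

```python
def sample_radius(d, f, m=1.0, e=1.0):
    """Sample radius r = m * pow(abs(f - d), e)."""
    return m * pow(abs(f - d), e)


# Radius at a few distances from the camera, with the focus at 10.0.
distances = (10.0, 10.5, 12.0, 14.0)
for e in (0.5, 1.0, 2.0):
    radii = [round(sample_radius(d, f=10.0, e=e), 3) for d in distances]
    print("e =", e, "radii =", radii)
```

The radius is always zero at the focus distance. `m` scales every radius by the same factor, while `e` changes how quickly the radius grows as a point moves away from the focus distance.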

If you try this you will probably notice that there are a lot of other things to tweak as well. At least this should get you started.

One thing to keep in mind is that this method doesn't really require you to work in 3D space. The sampling might as well happen in 2D, and the sampling radius, `r`, described above, could be any number of different functions.
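As a sketch of that idea, here is a 2D variant in Python where the radius comes from an arbitrary function of the sampled point. The names `rnd_disc`, `sample_line_2d` and `radius_fn` are hypothetical, and the example radius function (blur that grows with horizontal distance from `x = 0.5`) is just one arbitrary choice.

```python
import math
import random


def rnd_disc(r):
    """Uniform random point inside a disc of radius r (2D analogue of rndSphere)."""
    angle = random.uniform(0.0, 2.0 * math.pi)
    rad = r * math.sqrt(random.random())
    return (rad * math.cos(angle), rad * math.sin(angle))


def sample_line_2d(a, b, radius_fn, samples=1000):
    """Return blurred 2D dot positions along the line [a, b].

    radius_fn maps a 2D point to the sampling radius at that point.
    """
    dots = []
    for _ in range(samples):
        s = random.random()
        v = (a[0] + s * (b[0] - a[0]), a[1] + s * (b[1] - a[1]))
        o = rnd_disc(radius_fn(v))
        dots.append((v[0] + o[0], v[1] + o[1]))
    return dots


# Example: the line is sharp at x = 0.5 and gets blurrier towards both ends.
dots = sample_line_2d((0.0, 0.0), (1.0, 0.0), lambda v: 0.1 * abs(v[0] - 0.5))
```

Swapping in a different `radius_fn` (distance to a point, a noise value, and so on) changes where the drawing appears in focus.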

Read the next post to see how to add a colour shift effect as well.

- An extension of the method described in this earlier post.

- I use the word "render" in a rather loose sense here. I have by no means built a 3D pipeline, just a few tools that can do 3D to 2D projection, and that (rather inefficiently) draw raster images.

- All of this assumes world coordinates. How that affects you depends on how you project your 3D points into 2D. Moreover, since my projection function uses orthographic projection, the distance function does not calculate the distance from `p` to `c`. Instead it calculates the distance from `p` to the point where `p` hits the camera plane.
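
For the orthographic case in the last note, the distance from a point to the camera plane might be computed along these lines. This is a sketch under my own assumptions: the camera plane is taken to pass through `c` and to be perpendicular to a viewing direction, and both `view_dir` and the function name `dst_to_camera_plane` are hypothetical.

```python
import math


def dst_to_camera_plane(p, c, view_dir):
    """Perpendicular distance from p to the plane through c with normal view_dir.

    Assumption: view_dir is the (non-zero) direction the camera looks along;
    it does not need to be normalised.
    """
    length = math.sqrt(sum(x * x for x in view_dir))
    dot = sum((pi - ci) * di for pi, ci, di in zip(p, c, view_dir))
    return abs(dot) / length
```

With this in place of the point-to-point distance, all points at the same depth get the same blur, regardless of how far they are from the centre of the image.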